One of the main causes for low literacy in America is the archaic mental models that constrain the ways we conceive of, design, and deliver reading instruction.

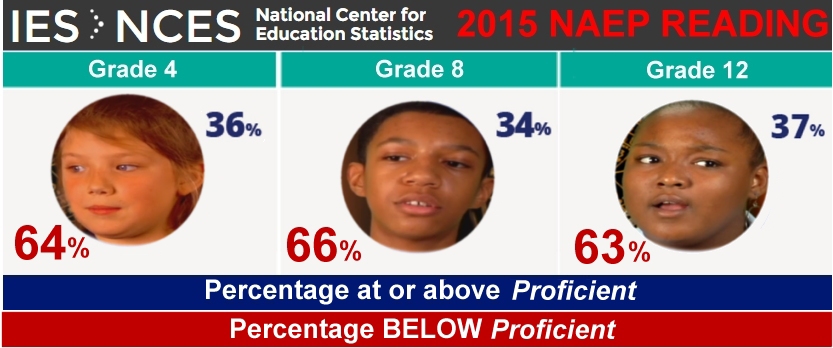

Over half of all the K-12 students in America are less than grade level proficient in reading. The psychological, academic, social, political, and economic costs are staggering. Yet it’s been this way for decades, despite designating ‘grade level reading’ to be a national priority, despite thousands of scientific studies, and despite hundreds of billions of dollars spent on reading instruction. Why? What’s so difficult about learning to read?

Sure, early-life-learning-trajectories that insufficiently prepare children for the challenges (linguistic, cognitive, emotional, and attentional) involved in learning to read certainly make it harder. But why haven’t we been able to overcome those variations with instruction? The prevailing wisdom would say, it’s because teachers don’t understand what is involved in acquiring literacy; they haven’t been trained, or aren’t following the instructional models that are out there.

No!

It is the paradigm common to all those models that perpetuates this crisis. Any child who has even a rudimentary spoken language could learn to read, if met and guided in a way that adaptively scaffolded them up from the language that he or she has. The fault isn’t in the kids, and it’s not the fault of the parents or teachers, either.

The fault lies with thinking of reading instruction through a 15th century ‘static printed text’ model of orthography. Our ‘reading science’ is warped by archaic assumptions about the immutability of the code and, based on those assumptions, locked into thinking about how children’s brains learns to read through the prism of prevailing models of reading instruction.

The Future

Kids in the near future will not have to be ‘taught’ to read. Every interaction with every word on every device will support them learning to read on their own.

Sound far-fetched? Click on any word on this page, and then click it again, and again! You will see and hear what I mean

We will inevitably move to a ‘dynamic digital character’ model of orthography. As we do, simple everyday technology (already installed on smartphones, tablets, computers, and even TV set-top boxes) will make words come alive with everything needed to support kids learning to read them on their own and with far less instruction required.

A Deeper Dive

We only sense now. We only feel now. We only think now. We only learn now. We are naturally ‘wired’ to learn from what is happening on the living edge of now. Humans learn best by differentiating, refining, and extending their participation on the living edge of now.

Reading requires an unnatural kind of learning. Reading (as well as writing, math, and all their abstract, conventional, and technological outgrowths) requires our brains to process information in complexly artificial ways. We learn to move, feel, touch, smell, taste, hear, emote, walk, and talk by reference to the immediate internal feel of learning them. However, in the artificial domain of writing we learn from the external abstract authority of who or what we are learning from and the technological conventions of the medium we are learning through. In natural modes of learning, we learn from immediately synchronous (self-generated) feedback on the edge of participating (i.e., falling while walking). In the artificial modes, (other-provided) feedback can be far out of ‘sync’ with the learning it relates to (for example, reading test results in school that provide feedback far downstream from the learning they measure).

Children who struggle with reading are struggling with an artificial learning challenge. In reading, our brains must process a human invented ‘code’ and construct a simulation of language. This unique form of neural circuitry conscripts the biologically based language processes of our brains to perform in programmably mechanical ways, according to the instructions and information contained in the c-o-d-e. The virtual machinery that must form in our brain to do this is as artificial as a CD player.

The Absurdity of Explicit Abstract Reading Instruction

Can you imagine trying to help a toddler learn to walk by giving them verbal ‘how to’ instructions when they are sitting? Can you imagine trying to teach kids to learn to ride a bicycle without using a bicycle – by trying to teach them through the use of abstract exercises rather than a guiding hand during the real-time live act of trying to ride the bicycle?

All prevailing models of reading instruction share a similar absurdity. They all involve methods of instruction that are abstractly removed from the live act of reading they intend to improve. They are all designed to train learner’s brains to perform unconsciously automatic code-processing operations that will later, when engaged in actual reading, result in fluent word recognition. Why? Because the technology we have used to teach reading has been incapable of interactively coaching and supporting children on the living edge of their learning to read. Unable to respond to learners during the real-time flow of their learning to work out unfamiliar words, we’ve been forced to train them in abstract offline ways.

At Learning Stewards (a 501c3 non-profit), we have turned the process completely upside-down and inside-out. Rather than using abstract training exercises, we have created a technology based pedagogy that is based on instantaneously responding to and coaching learners, word-by-word, whenever they need it.

Our tech provides autonomous learning-to-read guidance and support, which safely and differentially stretches the learner’s mind into learning to decode. Instead of teaching phonics rules and spelling patterns to be later applied (hopefully) to the decoding of unfamiliar words, our tech interactively guides students through the process of working out unfamiliar words, and it teaches them the rules and patterns in the process. With this model, kids learn 3 simple steps that enable them to learn to read (thereafter without the need for any ‘offline’ instruction).

Learning to Read 1-2-3: 1) Click on ANY word. 2) Try to read word in pop-up. Can’t? Click word in pop-up. 3) Repeat. Try it, click on any word in this article.

Every time a student encounters a word that she or he doesn’t recognize, they touch or click it. This brings up a pop-up box containing the word. Clicking on the word in the pop-up results in visual and audible ‘cues’ that reduce and often eliminate the (letter-sound-pattern) confusions in the word. With each click, the cues advance through a consistent series of steps that reveal (where applicable): the word’s segments, long and short sounds, silent letters, letter-sound exceptions, and groupings (blends and combinations). At each step, the student uses the cues to try again to recognize the word. If they can’t, they click again. If all of the cues (seen and heard) after the initial clicks aren’t sufficient to guide recognition of the word, a final click causes the pop-up to animate (visually and audibly) the ‘sounding-out’ of the word and lastly, the playing of the word’s sound as it is normally heard.

Kids in the future will not be explicitly-systematically taught to read any more than they are explicitly-systematically taught to talk. They will learn to read during their every interaction with every word on every device (phones, tablets, computers, tv sets, augmented reality). They will learn to read as a background process pervasively available while they are playing and learning with anything involving written words. All words – all devices – all the time.

Decades of research, thousands of studies, and billions of dollars later: 60+% of U.S. children are still chronically less than grade-level proficient in reading. We are dedicated to ending the archaic, abstract, tedious, precarious, and ineffective (and consequently life maligning) ways we have historically taught reading. It’s time to get our heads out of the past and recognize that learning to read is a technological process, and as such, a process best facilitated by technology.

Because nothing is more important to our children’s futures than how well they can learn when they get there.

Want to understand more? Click here.

Want to see it applied to a wide variety of content for all ages? Click here.

President of Learning Stewards and Director of the Children of the Code Project, David Boulton is a learning-activist, technologist, public speaker, documentary producer, and author. David appeared in the PBS Television show “The New Science of Learning” and in the Science Network’s “The New Science of Educating” broadcast.